Re: In my humble opinion

junko.yoshida 7/8/2016 11:16:06 AM

Olaf, that's a good point. No, owners don't read thick manuals anymore. Everyone expects

an "out of the box" experience. If you have to look it up in the manual or search for answers on

wikiHow, yeah, that's definitely a turn-off.

What actually bothers me about this case is that the whole Tesla "fanboy" community really

encouraged reckless driving behaviors -- i.e. using an ADAS system for "autonomous" hands-

free driving. They post their own "look ma, no hands" video clips on YouTube, enthralled by

the number of clicks they get. On top of that, a driver gets a "retweet" from Elon Musk, which makes

him feel as though he has died and gone to heaven.

You know how the circular nature of social media goes. Everyone follows what everyone

else seems to be doing, and kids himself that it's OK to drive his Tesla hands-free.

Calling ADAS "Autopilot" is the biggest misnomer of this decade in my humble opinion.

Copyright © 2016 UBM All Rights Reserved

----------------------------------------------

Re: good article

junko.yoshida 7/8/2016 11:03:28 AM

Eric, thanks for chiming in. This msg coming from you -- really one of the pioneers of CMOS

image sensors -- means a lot to us.

Yes, I am sure that Tesla and all the technology suppliers have a better handle on what

went wrong. But you raise a good question here.

If you, as a driver, are blinded by the sun, you slow down -- rather than telling yourself,

"I can't classify what's in front of me."

The car manufacturer -- and tech suppliers, too -- must do a much better job in spelling out

the limitations of their products.

The public in this day and age tends to be too trusting of technology.

----------------------------------------------

Re: Truck safety regulations

sixscrews 7/7/2016 7:41:00 PM

Trucks do have rear crash barriers but not side barriers although I have seen more and

more trailers with side airfoils intended to reduce aerodynamic drag - reinforcing these as

crash barriers would be a no-brainer. On the other hand, no matter what kind of barrier you

have, a 65 mph impact is likely to be lethal or at least result in serious injury to the front seat

occupants.

I appreciate the endorsement of certification of automotive software. Carmakers and others

will carry on about gov't interference but if you had a chance to read some of the code in

the Toyota TCM it would make your eyes spin - spaghetti code doesn't even begin to

describe it. I wrote better code (I think) back in 1978 when we were using punch cards.

There are lots of excellent software engineers and managers who know how to get them to

produce excellent code - all it takes is for the auto industry to get smart and hire them.

But they are cheap and dumb, IMHO, and more focused on marketing than product

development (with some exceptions - Ford's focus on turbochargers is impressive but I

have no idea what their software looks like).

And then there is Volkswagen's diesel emission cheat software - you can do all kinds of

things in code provided nobody but your supervisors looks at it.

wb/ss

----------------------------------------------

Re: Who is responsible for the accident?

sixscrews 7/7/2016 4:47:40 PM

Your comments are dead on - what was going on here?

However, if an autopilot system cannot compensate for stupid

drivers of other vehicles on the same roadway then it's worse

than useless - it's a loaded weapon pointed straight at the

head of the vehicle operator - whose primary responsibility is

to keep aware of the situation.

So, if Tesla is marketing this as a 'play your DVD and let us do

the driving' then they are worse than fools - they are setting

their clients up for fatal crashes.

At this time there is no software that can anticipate all the

situations that will occur on a roadway and anyone who claims

otherwise is ignorant of the state of software circa 2016.

And this brings me to one of my favorite dead horses – the

NHTSA has no authority to certify vehicular control software

and this allows manufacturers to release software that is

developed by a bunch of amateurs with no idea of the

complexities of real time control systems (see Toyota throttle

control issues revealed in the Oklahoma case 18 months ago).

Congress - or whatever group of clowns masquerades as

Congress these days - must implement, or allow NHTSA or

another agency to create, a set of regulations similar to those

applying to aircraft flight control systems. A vehicle operating

in a 2-D environment is just as dangerous as an aircraft

operating in a 3-D environment and any software associated

with either one should be subject to the same rules.

Automakers are behaving as if the vehicles they manufacture

are still running on a mechanical distributor-points-condenser-

coil system with a driver controlling manifold pressure via the

'gas pedal' as was true in 1925. They fought air bags,

collapsible steering columns, safety glass and dozens of

other systems from the '20s to the present. Enough is enough.

Mine operators who intentionally dodge safety rules spend

time behind bars (or maybe - provided the appeals courts

aren't controlled by their friends). So why shouldn't

automakers be subject to the same penalties? Kill your

customer - go to prison, do not pass GO, do not collect $200.

The engineering of automobiles is not a game of lowest bidder

or cheapest engineer; given the cost of vehicles today it should

be the primary focus of company executives, not something to

be passed off as an annoyance and cost center with less

importance than marketing.

It's time for automakers to step up and take responsibility for

the systems they manufacture and live with certification of the

systems that determine life or death for their customers.

wb/ss

===============================

Tesla Crashes BMW-Mobileye-Intel

Event

Recent auto-pilot fatality casts pall

Junko Yoshida, Chief International

Correspondent

7/1/2016 05:32 PM EDT

---------------------------------------------

(selected comment - by the article author):

Re: Need information

junko.yoshida 7/2/2016 7:18:45 AM

@Bert22306 thanks for your post.

I haven't had time to do a full story but here's what we know

now:

You asked:

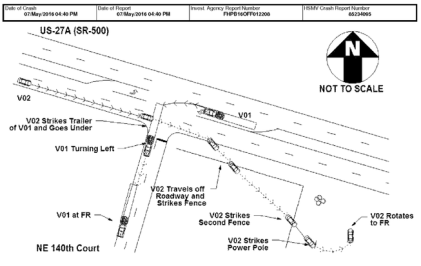

1. So all I really want to know is, why did multiple redundant

radar and optical sensors not see the broad side of a

trailer?

Tesla Model S doesn't come with "multiple redundant radar

and optical sensors." It has one front radar, one front

monocular Mobileye optical camera, and a 360-degree set of

ultrasonic sensors.

In contrast, similar class cars like Mercedes-Benz come with

a lot more sensing hardware devices including short-range

radar, multi-mode radar, stereo optical camera in addition to

what Tesla Model S has.

But that's beside the point. More sensing devices obviously

help but how each carmaker is doing sensor fusion and what

sort of algorithms are at work are not known to us. There are

no good yardsticks available to compare different algorithms

against one another.

2. Must have been a gymongous radar target, no?

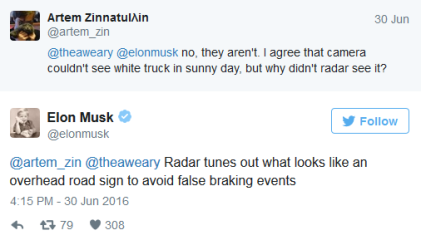

Yes, the truck was a huge radar target. But according to Tesla's

statement, "The high, white side of the box truck, combined

with a radar signature that would have looked very similar to

an overhead sign, caused automatic braking not to fire."

In other words, Tesla's autopilot system believed the truck was

an overhead sign that the car could pass beneath without

problems.

Go figure.

3. Why the driver didn't notice is easily explained.

There have been multiple reports that the police found a DVD

player inside the Tesla driver's car. There have been

suggestions -- but it isn't confirmed -- that the driver might have

been watching a film when the crash happened. But again,

we don't know this for sure.

4. Even though this was supposed to be just assisted

driving, one might assume that the driver was distracted

You would think. It's important to note, however, that the driver

killed in this Tesla autopilot crash was known to be a big

Tesla fan, and he posted a number of viral videos of his

Model S.

Earlier this year, he posted one video clip showing his Tesla

avoiding a close-call accident with autopilot engaged. (Which

obviously got attention and a tweet back from Elon Musk...)

Here's the thing. Yes, what autopilot did in that video is cool,

but it's high time for Musk to start tweeting the real limitations

of his autopilot system.

5. Saying things like "We need standards" is so peripheral!

I beg to differ, Bert.

As we try to sort out what exactly happened in the latest Tesla

crash, we realize that there isn't a whole lot of information

publicly available.

Everything Google, Tesla and others do today remains private, and

we have no standards to compare them against. The

autonomous car industry is still living in the dark age of siloed

communities.

I wrote this blog because I found interesting what Mobileye's

CTO had to say in the BMW/Mobileye/Intel press conference

Friday morning. There were a lot of good nuggets there.

But one of the things that struck me is this: whether you like it

or not, auto companies must live with regulations. And

"regulators need to see the standard emerging," as he put it.

As each vendor develops its own approach to sensor fusion, its own

software stack, etc., one can only imagine the complexities

multiplying in standards for testing and verifying autonomous

cars.

Standards are not peripheral. They are vital.

===============================